Deli Foods is an emerging restaurant business with a presence all over the United States.

They currently have a legacy web application written in Java and hosted on their private server: https://project-deliapp.s3.us-east-2.amazonaws.com/DeliApp/src/main/webapp/index.html

Updating the application usually takes 5 hours, and updates are manual. This causes a lot of downtime and hurts the business, because clients get locked out, which gives competitors the upper hand.

Your task is to migrate this application to the cloud and apply DevOps practices across their entire software development life cycle.

You should show concepts that implement Plan -> Code -> Build -> Test -> Deploy -> Monitor.

TASK A - Documentation: Set up a Wiki Server for your Project (Containerization)

a. Get the docker-compose file from the link below:

https://github.com/bitnami/bitnami-docker-dokuwiki/blob/master/docker-compose.yml

Or

run the command below in your terminal to fetch the YAML, then save it as your Docker Compose file:

curl -sSL https://raw.githubusercontent.com/bitnami/bitnami-docker-dokuwiki/master/docker-compose.yml

b. Mount your own data volume on this container

Hint: do this by modifying the Docker Compose file.

c. Change the default port so that the wiki server runs on port 84

d. Change the default username and password to:

Username: DeliApp

Password: admin

Hint: use the official image documentation to find the details needed to accomplish all of this:

https://github.com/bitnami/bitnami-docker-dokuwiki

TASK A Acceptance Criteria:

i. The wiki server should be up and running and serving on port 84

ii. Mount your own container volume to persist data

iii. Log in with the credentials DeliApp/admin
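
One way to approach items b to d is to generate the Compose file from a small shell function. This is a minimal sketch, not the official file: the image name, the container port 8080, the data path /bitnami/dokuwiki, the DOKUWIKI_USERNAME and DOKUWIKI_PASSWORD variables, and the volume name deliapp_wiki_data are assumptions that should be checked against the image documentation linked above.

```shell
#!/usr/bin/env bash
# Sketch: print a docker-compose.yml for the wiki server.
# Assumptions (verify in the image README): the container listens on 8080,
# stores data under /bitnami/dokuwiki, and reads DOKUWIKI_USERNAME and
# DOKUWIKI_PASSWORD on first start. deliapp_wiki_data is a hypothetical name.
render_wiki_compose() {
  # $1 = host port, $2 = wiki username, $3 = wiki password
  cat <<EOF
version: '2'
services:
  dokuwiki:
    image: docker.io/bitnami/dokuwiki:latest
    ports:
      - '$1:8080'
    environment:
      - DOKUWIKI_USERNAME=$2
      - DOKUWIKI_PASSWORD=$3
    volumes:
      - 'deliapp_wiki_data:/bitnami/dokuwiki'
volumes:
  deliapp_wiki_data:
    driver: local
EOF
}
```

Usage would look like `render_wiki_compose 84 DeliApp admin > docker-compose.yml`, followed by `docker-compose up -d`. A named volume is used here so the wiki data survives container re-creation.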

TASK B: Version Control The DeliApp Project

Plan & Code

App Name: DeliApp

- Workstation A - Team PathFinders - 3.15.209.165

- Workstation B - Team Goal Diggers - 3.143.221.53

- Workstation C - Team Fantastic 4 - 3.144.208.46

- Workstation D - Team PracticeToPerfect - 3.131.152.227

(You can use GitHub or Bitbucket.)

1) Set up 2 repos: a Build repo to store the whole code base and a Deployment repo to store all your deployment scripts. Name them as shown below:

- Build repo: DeliApp_Build (developers' access)

- Deployment repo: DeliApp_Deployment (your team's access)

2) Version control the DeliApp project located on the developers' workstation so that the developers can migrate their code to the source control management tool (Bitbucket/GitHub)

- Set up the developers' workstation SSH keys in Bitbucket to access the Build repo, and your team's (DevOps) workstation SSH keys in Bitbucket to access the Deployment repo

3) Git branching strategy for DeliApp_Build

- master

- release, e.g. release/release-v1

- feature, e.g. feature/feature-v1

- develop

4) Git branching strategy for DeliApp_Deploy

- master

- feature, e.g. feature/feature-v1

- develop

TASK B Acceptance Criteria:

1. You should be able to push and pull code between the developer workstation assigned to your team and the DeliApp_Build repo in source control management (SCM)

2. Your team (DevOps) should be able to pull and push code between your individual workstations and the DeliApp_Deploy repo

3. Demonstrate the Git branching strategy

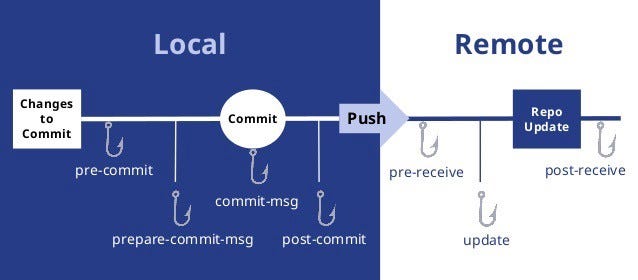

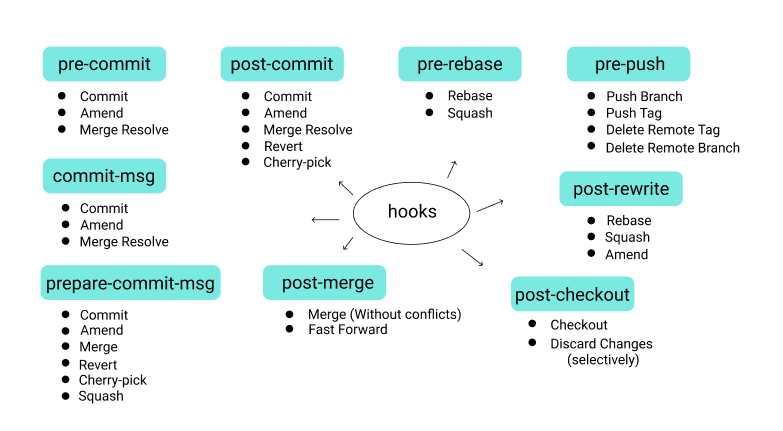

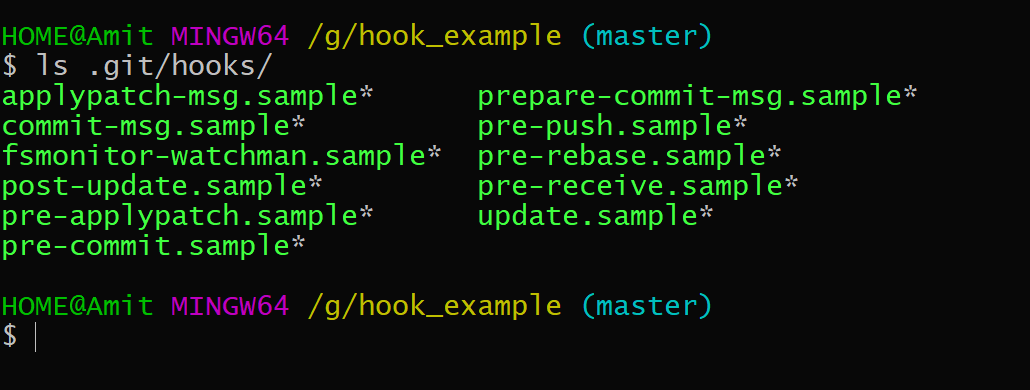

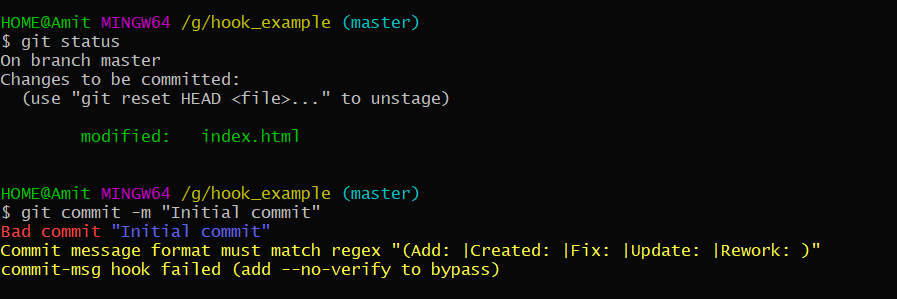

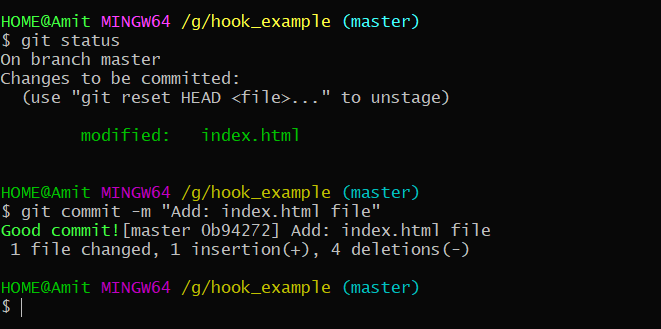

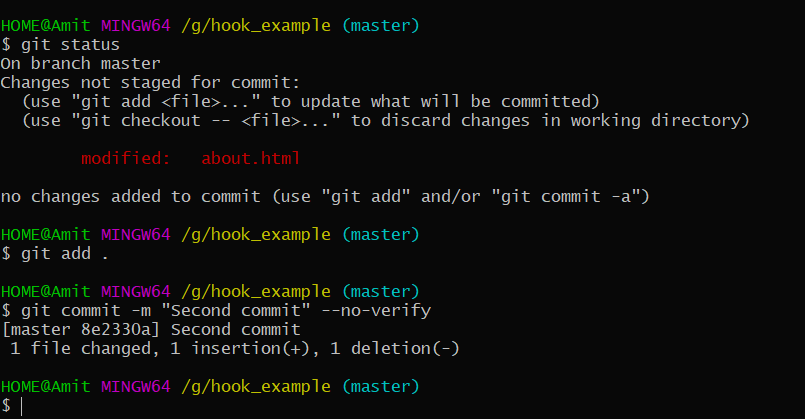

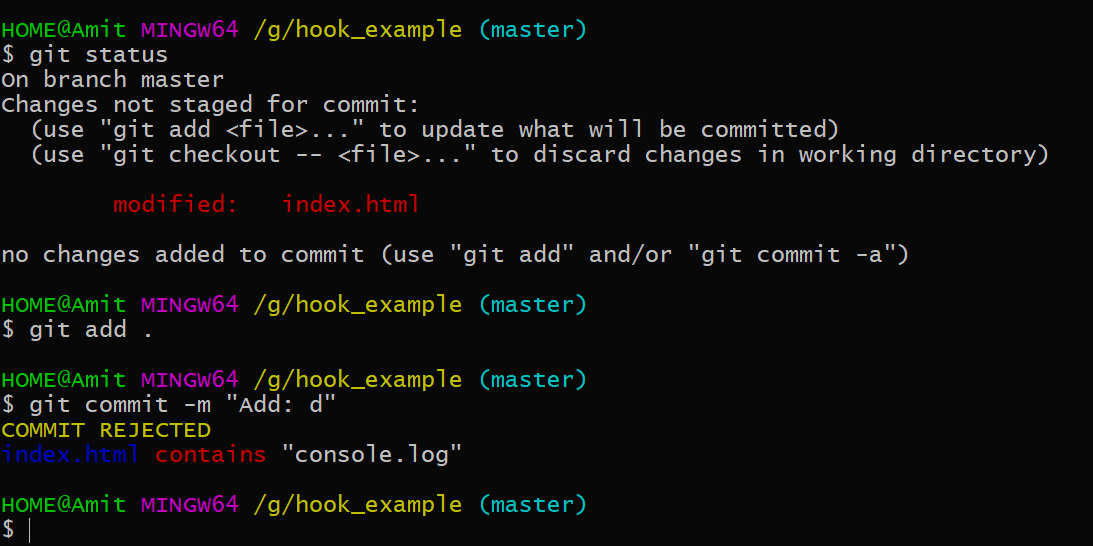

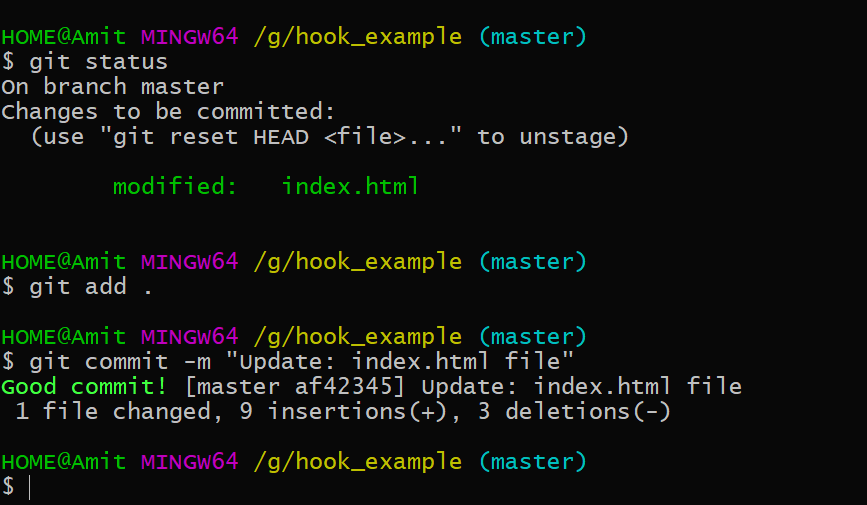

4. Your git commit should throw an error when there is a secret in your repo

Hint: add a text file containing some secrets, e.g. an AWS secret key/access key, and commit it

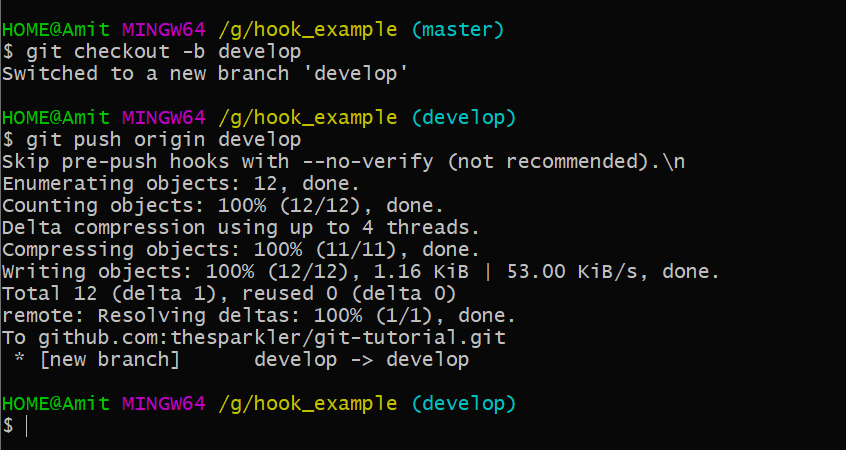

5. You should get an error when you try to push to master
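
Criteria 4 and 5 can be approached with client-side Git hooks. The sketch below is one possible shape, under stated assumptions: the function names are hypothetical, the regular expression only covers the common AWS access key ID format and a literal `aws_secret_access_key =` assignment, and the scan reads the working-tree copy of each staged file. It is not a complete secret scanner.

```shell
#!/usr/bin/env bash
# Sketch of two Git hook checks (all function names are hypothetical).

# Return 0 if the file looks like it holds an AWS access key ID or a
# secret-key assignment. Illustrative patterns only.
file_has_secret() {
  grep -Eq 'AKIA[0-9A-Z]{16}|aws_secret_access_key[[:space:]]*=' "$1"
}

# Return 0 if the given ref is the protected master branch.
is_protected_ref() {
  [ "$1" = "refs/heads/master" ]
}

# For .git/hooks/pre-commit: fail if any staged file matches.
pre_commit_check() {
  git diff --cached --name-only --diff-filter=ACM | while IFS= read -r f; do
    if file_has_secret "$f"; then
      echo "ERROR: possible secret in $f; commit rejected" >&2
      exit 1   # exits the pipeline subshell, so the function returns 1
    fi
  done
}

# For .git/hooks/pre-push: Git feeds one line per pushed ref on stdin as
# "<local ref> <local sha> <remote ref> <remote sha>".
pre_push_check() {
  while read -r _local_ref _local_sha remote_ref _remote_sha; do
    if is_protected_ref "$remote_ref"; then
      echo "ERROR: direct pushes to master are blocked" >&2
      return 1
    fi
  done
  return 0
}
```

Each hook file would source these functions and call `pre_commit_check` or `pre_push_check`. Client-side hooks can be bypassed, so branch restrictions configured in Bitbucket or GitHub are a more robust way to enforce criterion 5.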

TASK C: Set up your Infrastructure

1. Set up your environments: DEV, UAT, QA, PROD A, PROD B

Provision 6 Apache Tomcat servers. You can use any IaC tool (Terraform, CloudFormation, Ansible Tower) and host them on any cloud provider (AWS, Google Cloud, Azure).

i. DEV - t2.micro - 8 GB

ii. UAT (User Acceptance Testing) - t2.small - 10 GB

iii. QA (Quality Assurance) - t2.large - 20 GB

iv. PROD A - t2.xlarge - 30 GB

v. PROD B - t2.xlarge - 30 GB

Apache Tomcat Servers should be exposed on Port 4444

Linux Distribution for Apache Tomcat Servers: Ubuntu 18.04

Note: when bootstrapping your servers, make sure you install the Datadog Agent

2. Set up your DevOps tool servers:

(These can be provisioned manually or with an IaC tool. Feel free to use any Linux distribution on these, e.g. Amazon Linux 2, Debian, Ubuntu, etc.)

1 Jenkins (CI/CD) - t2.xlarge - 20 GB

1 SonarQube (code analysis) - t2.small - 8 GB

1 Ansible Tower - t2.2xlarge - 15 GB

1 Artifactory Server - t2.2xlarge - 8 GB

1 Vulnerability Scanning Tool Server - OWASP ZAP (install on a Windows instance). See: https://www.devopstreams.com/2022/06/getting-started-with-owasp-zap.html

1 Kubernetes Server - you can use EKS, k3s, kubeadm or minikube (note: your Kubernetes can be installed on your Jenkins server)

TASK D: Monitoring

a. Set up continuous monitoring with Datadog by installing the Datadog Agent on all your servers

Acceptance criteria:

i. All your infrastructure server metrics should be monitored (infrastructure monitoring)

ii. All running processes on all your servers should be monitored (process monitoring)

iii. Tag all your servers on the Datadog dashboard

TASK E: Domain Name System

a. Register a Domain for your Team

i. You can use Route 53, GoDaddy or any DNS service of your choice

e.g. www.team-excellence.com

TASK F: Set Up Automated Build for Developers

The developers use Maven to compile the code

a. Set up a CI pipeline in Jenkins using a Jenkinsfile

b. Enable webhooks in Bitbucket to trigger an automated build of the pipeline job

c. The CI pipeline job should run on an agent (slave)

d. Help the developers to version their artifacts, so that each build has a unique artifact version

Pipeline job Name: DeliApp_Build

The pipeline should check out the code from SCM, build it with Maven, run code analysis and code coverage with SonarQube, upload the artifacts to Artifactory, version the artifacts, and send an email to the team

The pipeline should send a Slack channel notification with the build status

Acceptance criteria:

Automated build after code is pushed to the repository

1. Sonar Analysis on the sonarqube server

2. Artifact uploaded to artifactory

3. Email notification on success or failure

4. Slack Channel Notification

5. Each artifact has a unique version number

6. Code coverage displayed
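
For criterion 5, one common approach is to derive the artifact version from values that change on every build. The following is a minimal sketch, assuming a version scheme of base version, build number and short commit hash; the scheme and the function name are illustrative choices, not something the task prescribes.

```shell
#!/usr/bin/env bash
# Sketch: build a unique artifact version string.
# Format (an assumption): <base version>-<build number>.<first 7 chars of commit>
artifact_version() {
  # $1 = base version, $2 = build number, $3 = full or short commit hash
  printf '%s-%s.%s\n' "$1" "$2" "$(printf '%s' "$3" | cut -c1-7)"
}
```

In a Jenkins shell step this could be called as `artifact_version 1.0.0 "$BUILD_NUMBER" "$GIT_COMMIT"`, since Jenkins exposes `BUILD_NUMBER` and the Git plugin exposes `GIT_COMMIT`. The result could then be passed to Maven, for example through the Versions plugin (`mvn versions:set -DnewVersion=...`), before packaging.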

TASK G: Deploy & Operate (Continuous Deployment)

a. Set up a CD pipeline in Jenkins using a Jenkinsfile

Create 4 CD pipeline jobs, one for each environment (Dev, UAT, QA, Prod), or 1 pipeline that can select any of the 4 environments

Pipeline job name: e.g. DeliApp_Dev_Deploy

i. The pipeline should be able to deploy to any of your lower-level environments (LLE: Dev, UAT, QA) or higher-level environments (HLE: Prod A, Prod B)

You can use the Deploy to container plugin in Jenkins, or deploy using Ansible Tower, to pull the artifact from Artifactory and deploy it to Dev, UAT, QA or Prod

ii. Pipeline should have slack channel notification to notify deployment status

iii. Pipeline should have email notification

iv. Deployment Gate

1. Acceptance criteria:

i. Deployment is seen and verified in either Dev, Uat, Qa or Prod

ii. Notification is seen in slack channel

iii. Email notification

TASK H: a. Deployment and Rollback

a. Automate the manual deployment of a specific version of the Deli application using Ansible Tower

The manual deployment process is below:

Step 1: Log in to the Tomcat server

Step 2: Download the artifact

Step 3: Switch to root

Step 4: Extract the artifact to the deployment folder

Deployment folder: /var/lib/tomcat8/webapps

Use service ID: ubuntu

Acceptance Criteria:

i. Deploy new artifact from artifactory to either Dev, Uat, Qa or Prod

ii. Roll back to an older artifact from Artifactory on either Dev, UAT, QA or Prod

iii. All credentials should be encrypted
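
Before writing the Ansible Tower job, it can help to express the manual steps as a script. The sketch below assumes a Maven-layout repository in Artifactory and copies the WAR into the deployment folder so that Tomcat can unpack it. All variable names (ARTIFACTORY_URL, ARTIFACTORY_REPO, GROUP_ID, ARTIFACT_ID, ARTIFACTORY_USER, ARTIFACTORY_TOKEN, DEPLOY_DIR) and both function names are hypothetical, and credentials are expected to come from an encrypted store rather than the script itself.

```shell
#!/usr/bin/env bash
# Sketch: deploy (or roll back to) a specific artifact version.
# Every variable and function name here is a hypothetical placeholder.

# Build the download URL for a WAR stored in Maven repository layout.
artifact_url() {
  # $1 = base URL, $2 = repo, $3 = groupId, $4 = artifactId, $5 = version
  group_path="$(printf '%s' "$3" | tr '.' '/')"
  printf '%s/%s/%s/%s/%s/%s-%s.war\n' "$1" "$2" "$group_path" "$4" "$5" "$4" "$5"
}

# Download one version and place it in the Tomcat deployment folder.
# Rolling back is the same call with an older version number.
deploy_version() {
  # $1 = version to deploy
  url="$(artifact_url "$ARTIFACTORY_URL" "$ARTIFACTORY_REPO" "$GROUP_ID" "$ARTIFACT_ID" "$1")"
  curl -fsSL -u "$ARTIFACTORY_USER:$ARTIFACTORY_TOKEN" -o "/tmp/$ARTIFACT_ID.war" "$url" &&
    sudo cp "/tmp/$ARTIFACT_ID.war" "${DEPLOY_DIR:-/var/lib/tomcat8/webapps}/"
}
```

Because deployment and rollback differ only in the version argument, a single parameterized job template in Ansible Tower could cover both acceptance criteria.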

TASK H: b. Domain Name Service and Load Balancing

i. Add an Application Load Balancer or Elastic Load Balancer to manage traffic between your Prod A and Prod B servers

ii. Configure your DNS with Route 53 so that entering your domain, e.g. www.team-excellence.com, directs you to the load balancer, which in turn points to Prod A or Prod B

Acceptance criteria:

i. Your team domain name, e.g. www.mint.com, will take you to your application residing on Prod A or Prod B

TASK I:

a. Set up a 3-node Kubernetes cluster (container orchestration) with the namespaces dev, qa and prod

- Use a Jenkins pipeline or Jenkins job. The pipeline or job should be able to create/delete the cluster

b. Dockerize the DeliApp

- You can use a Dockerfile to create the image, or the OpenShift Source-to-Image tool

1. You should be able to create/delete a Kubernetes cluster

2. You should be able to deploy your application into any namespace (dev, qa, prod)

3. You should be able to access the application through a NodePort or LoadBalancer service

4. You should be able to monitor your cluster in Grafana
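
The three namespaces can be created from a pipeline step by generating their manifests. This is a small sketch with a hypothetical function name; it only prints standard `v1` Namespace manifests and leaves cluster creation to whichever tool you chose.

```shell
#!/usr/bin/env bash
# Sketch: print one Namespace manifest per argument, separated by '---'.
namespace_manifests() {
  for ns in "$@"; do
    printf '%s\n' '---' 'apiVersion: v1' 'kind: Namespace' 'metadata:' "  name: $ns"
  done
}
```

A job could run `namespace_manifests dev qa prod | kubectl apply -f -`. Using `apply` keeps the step repeatable when the namespaces already exist.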

TASK J: Demonstrate Bash Automation of

i. Tomcat

ii. Jenkins

iii. Apache

Acceptance criteria:

1. Show bash scripts and successfully execute them
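
As an illustration for item i, the sketch below installs Tomcat and moves its HTTP connector to port 4444. It assumes Ubuntu 18.04 with the `tomcat8` package, a stock `server.xml` at /etc/tomcat8/server.xml whose connector uses port 8080, and that the script runs as root (for example during server bootstrapping). The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: Bash automation of a Tomcat install (function names are hypothetical).

# Rewrite the HTTP connector port in a Tomcat server.xml.
# Assumes the stock connector line starts with <Connector port="8080".
# A backup of the original file is kept next to it with a .bak suffix.
set_tomcat_port() {
  # $1 = path to server.xml, $2 = new port
  sed -i.bak "s/<Connector port=\"8080\"/<Connector port=\"$2\"/" "$1"
}

# Install Tomcat 8, switch it to port 4444 and restart the service.
# Assumes root privileges and the Ubuntu 18.04 package layout.
install_tomcat() {
  apt-get update &&
    apt-get install -y tomcat8 &&
    set_tomcat_port /etc/tomcat8/server.xml 4444 &&
    systemctl restart tomcat8
}
```

Calling `install_tomcat` on the target server runs the whole sequence. Keeping the port change in its own function makes it possible to test it against a copy of `server.xml` before touching a live server.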